Artificial intelligence (AI) is increasingly woven into students’ lives — from classroom tools and search engines to the platforms that shape their social worlds. For educators, this raises an urgent question: how can schools ensure students engage with AI responsibly and critically, rather than passively adopting whatever new tool appears next?

In a recent Harvard EdCast episode, “Teaching Students to Think Critically About AI,” education scholars Stephanie Smith Budhai (University of Delaware) and Marie Heath (Loyola University Maryland) discussed how to help young people navigate AI with awareness, equity, and purpose. Both are co-authors of Critical AI in K–12 Classrooms: A Practical Guide for Cultivating Justice and Joy (Harvard Education Press). Their work highlights how bias, values, and historical inequalities are often embedded within AI systems, and what educators can do to counteract them.

Key Takeaways:

- Acknowledge That AI Is Not Neutral: Budhai reminds educators that AI is “a piece of technology, not human, but also not neutral.” Its outputs reflect the assumptions, biases, and values of those who design and train it. Teachers can help students question who created a tool, what data it draws upon, and what purpose it serves.

- Move Beyond Excitement to Intentional Use: Educators are often quick to embrace new tools for their potential classroom benefits. Heath cautions that enthusiasm should be balanced with reflection. Schools should consider why and how AI is used, not simply adopt it because it is available. Intentional, critical use ensures technology serves learning goals rather than shaping them.

- Teach AI Literacy Through Inquiry and Reflection: Like media literacy, AI literacy begins with curiosity and questioning. Budhai and Heath suggest teaching students to audit technologies by asking who benefits, what trade-offs exist, and whose perspectives are represented. Such questioning cultivates deeper understanding and ethical awareness.

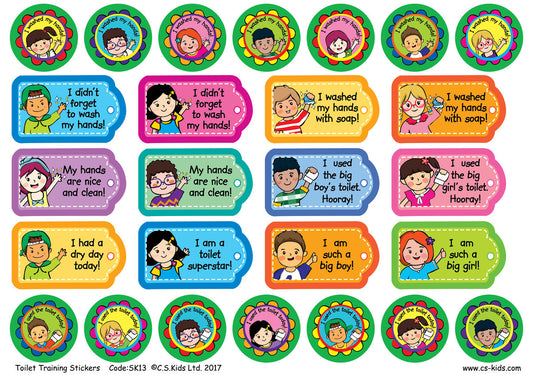

- Embed Equity and Culturally Responsive Pedagogy: Budhai stresses that AI must be examined through an equity lens. Educators can use culturally sustaining frameworks to ensure AI tools support, rather than disadvantage, diverse learners. For instance, Story AI, developed at UC Irvine, invites students to co-create stories with AI while examining how the technology portrays culture, identity, and representation. By revealing built-in biases (such as defaulting to certain appearances or settings), it helps learners discuss fairness, inclusion, and authorship in a tangible, age-appropriate way.

- Empower Students to Resist, Refuse, and Reclaim Technology: Not every digital tool deserves acceptance. Budhai and Heath encourage students to exercise agency — to question or even refuse technologies that misuse their data or reinforce inequality. “Refusing AI,” as they describe it, is part of cultivating democratic habits of choice and consent.

- Keep Students, Not Technology, at the Centre: Budhai concludes that educators should focus on the learner before the tool. The “SETT” model (Students, Environment, Tasks, Tools) reminds teachers that technology should come last. If AI genuinely enhances learning or accessibility, it can be adopted purposefully; if not, it is better left aside.

Helping students think critically about AI is not about teaching coding or keeping pace with technological change. It is about ensuring that young people understand the social and ethical dimensions of the technologies shaping their lives.

For educators, this means grounding classroom practice in intentionality, equity, and curiosity — asking not only what AI can do, but also what it should do, and for whom. When teachers and leaders model this kind of critical engagement, schools can become spaces where students learn to use AI wisely, ethically, and creatively.

For a deeper dive into the discussion, listen to the full Harvard EdCast episode, “Teaching Students to Think Critically About AI.”